The dream of fully autonomous vehicles is slowly moving closer to reality, but obstacles still litter the way to commercialization. One such obstacle is adverse weather, e.g., fog, rain, and snow, which substantially degrades visibility and, consequently, the performance of the visual recognition systems of autonomous vehicles. Since safety is a critical feature of autonomous vehicles, their visual perception systems must be robust against these impediments.

Professor Suha Kwak of the Department of Computer Science and Engineering (CSE), Sohyun Lee (GSAI Ph.D/M.S. Candidate, advisor Prof. Suha Kwak), and Taeyoung Son (CSE M.S.) developed an artificial intelligence (AI) model for visual recognition that can accurately identify predefined object classes such as people, cars, roads, and trees even in dense fog. The model robustly handles conditions in which previous techniques were known to fail, marking a step toward the commercialization of autonomous cars.

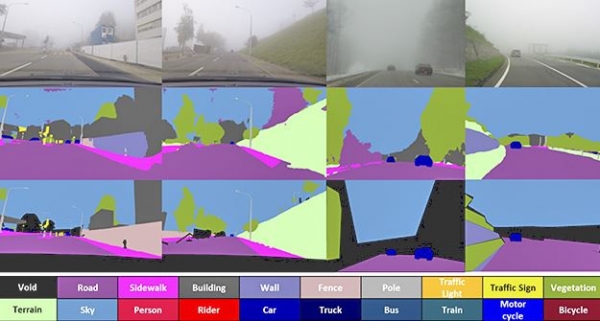

The research team made the breakthrough in visual recognition by treating the fog condition of an image as its style and training the AI model to learn image representations that are insensitive to variations in fog conditions. To achieve this, the team developed a fog-pass filter that extracts only the information related to the fog condition. An adversarial learning process that uses this information together with the image representation allowed the model to extract information independent of the fog condition. The resulting system greatly increases recognition accuracy on real-world foggy images and also generalizes well to other adverse weather conditions such as rain and frost.
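
The idea of treating fog as style can be illustrated with a minimal sketch. It assumes, as is common in style-transfer work, that the style of a feature map is summarized by its channel Gram matrix. The single-layer filter, the toy fog transformation, and all function names below are hypothetical and are not taken from the team's implementation.

```python
import numpy as np

def gram_style_vector(features):
    """Upper triangle of the channel Gram matrix of a (C, H, W) feature map."""
    c, h, w = features.shape
    flat = features.reshape(c, h * w)
    gram = flat @ flat.T / (h * w)
    rows, cols = np.triu_indices(c)
    return gram[rows, cols]

def fog_factor(style_vector, weights):
    """Hypothetical fog-pass filter: one linear layer followed by tanh."""
    return np.tanh(weights @ style_vector)

def fog_gap(factor_a, factor_b):
    """Squared distance between two fog factors."""
    return float(np.sum((factor_a - factor_b) ** 2))

rng = np.random.default_rng(0)
clear_features = rng.normal(size=(4, 8, 8))
# Toy stand-in for fog (an assumption): lower contrast plus a constant haze offset.
foggy_features = 0.5 * clear_features + 0.3
# 4 channels give 10 upper-triangular Gram entries; 3 output dimensions is arbitrary.
weights = rng.normal(size=(3, 10)) * 0.1

gap = fog_gap(
    fog_factor(gram_style_vector(clear_features), weights),
    fog_factor(gram_style_vector(foggy_features), weights),
)
print(gap)
```

In an adversarial setup of this kind, the filter would be updated to make `gap` larger for images with different fog conditions, while the recognition network would be updated to make it smaller, pushing its features toward fog invariance.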

This research was supported by the Samsung Science and Technology Foundation and was selected as an oral presentation paper at CVPR (IEEE/CVF Conference on Computer Vision and Pattern Recognition) 2022, a top international AI conference to be held in June. Oral presentation opportunities are given to only a few excellent papers.